The AI headlines aren’t wrong—they’re incomplete. The real risk for many knowledge workers isn’t pink slips; it’s a psychology gap we can actually close.

I’m tracking recent weeks’ headlines because the negative spiral is real. In higher education and other mission-driven settings, those headlines land hard: we’re asked to do more with less, safeguard quality and equity, and steward communities that value human connection. It’s natural that increasing talk about AI can feel like a threat rather than a help. But a new Harvard Business Review article argues that adoption lives or dies on psychology, not hype. When workers’ needs for competence, autonomy, and connection are frustrated, they resist, sometimes hard.

One stat stopped me: 31% of knowledge workers (and 41% of Gen Z) say they’ve actively worked against their company’s AI initiatives, and 32% hide their AI use. That’s not just sabotage—it’s data. In higher ed, museums, libraries, and government, where budgets are tight and missions matter, “shadow AI” looks less like misconduct and more like unmet needs. People want to feel capable. They want a say in how their work changes. They want to learn with trusted colleagues, not alone in a browser tab. If those needs aren’t met, resistance is a natural human response.

Here are a few takeaways from HBR’s piece that stood out:

- Adoption hinges on three psychological needs—competence, autonomy, relatedness. When those are met, workers see AI as a copilot; when they’re threatened, resistance spikes.

- The gap is real: leaders/managers report far higher gen‑AI use than workers. Meanwhile, 31% have worked against AI initiatives and 32% hide their AI use—signals to diagnose, not punish.

- Mandates undermine autonomy; they also push experimentation underground (“shadow AI”). Treat resistance as data about unmet needs.

- Training is shallow for most. One‑size‑fits‑all webinars don’t stick; peer AI champions, hands‑on playgrounds, and role‑specific learning build skill and confidence.

- Redesign end‑to‑end workflows, don’t just deploy tools. Automate simple/repetitive steps; augment the rest so humans keep judgment, context, and care.

- Empowerment and inclusion—transparency on how roles may evolve, broad access, and worker participation—drive durable engagement.

Want to try something this week from the authors’ AWARE framework?

- Acknowledge (30 minutes): Ask your team which tasks feel most threatened by AI—and why. Name the fear. Surface concerns; don’t suppress them. In a department meeting, invite 2–3 voices from different roles (faculty support, student services, operations) to share what they value and what they worry could be lost. Write it up and reflect it back.

- Watch (with curiosity): Look for task avoidance and “shadow AI.” Ask, “What would make this safe to try in the open?” instead of leading with policy. If someone is quietly using a tool to draft emails faster, explore what’s working and what quality checks they use. Turn that learning into shared practice rather than a reprimand.

- Align & Redesign (one small step): Offer a one-hour “AI playground” with a trusted peer activator. Share prompts. Swap wins and fails. No deliverables. Pair this with a simple, opt-in “use case shelf”: a living document of tasks where AI helps (transcript summarization, policy drafts) and tasks where it should not be used (sensitive student communications, original research grading), including the why.

- Empower (with transparency): Be clear about where experimentation is encouraged, where guardrails apply, and how feedback will shape the next iteration. Invite volunteers from different units to co-own the rollout and share stories back to the group.

Two truths can sit together: AI can reduce drudgery and it can be maddeningly uneven. It’s not a reason to cut teams, and it won’t do our meaning-making for us. The messy thinking (sitting with conflicting inputs, weighing tradeoffs, understanding culture) still matters, maybe more now. In universities and public-service organizations, that’s our core strength.

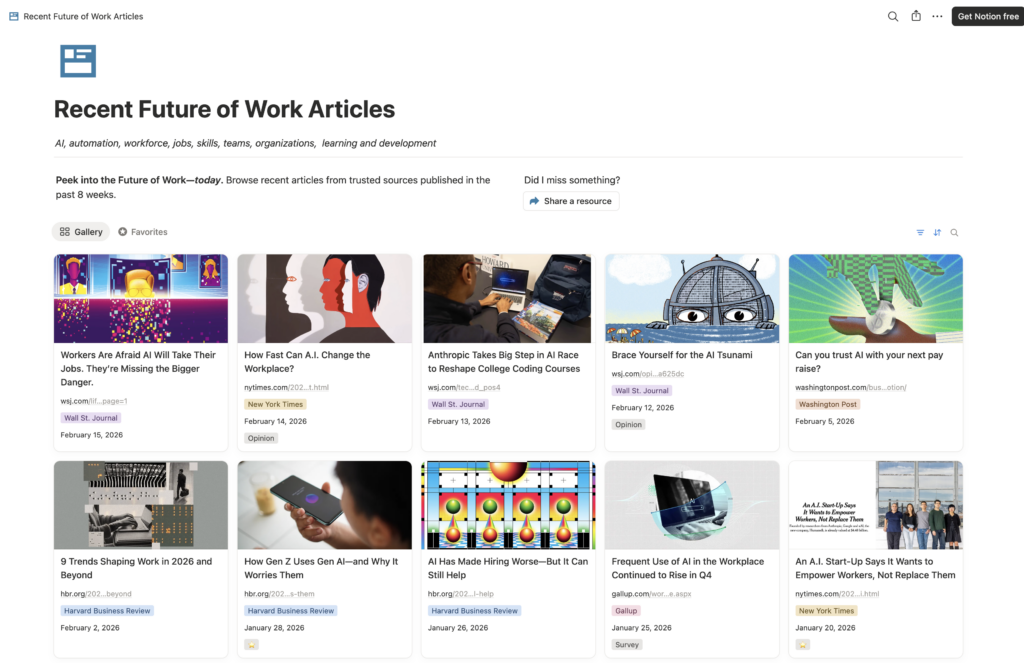

If you’re tracking the signal through the noise, I’m maintaining an AI-at-Work tracker (rolling 4 weeks + full archive across major outlets): https://repivot.notion.site/news/. It’s a quick way to see the range, from anxiety to real-world experiments, without getting lost in the extremes.

❇️ My hope: we give ourselves permission to pause long enough to design this well. When we do, the tools become less threatening and more useful, and the work feels a little more human—not less. Make this your repivot best practice.

Sources: Hermann, Puntoni, Morewedge. “Why Gen AI Feels So Threatening to Workers.” Harvard Business Review, Mar/Apr 2026.